As a user, when you sign up for a preview of a product you’ll likely receive a very generic thank-you message, a Mailchimp confirmation or nothing at all. When a company does something different, it stands out and users generally notice.

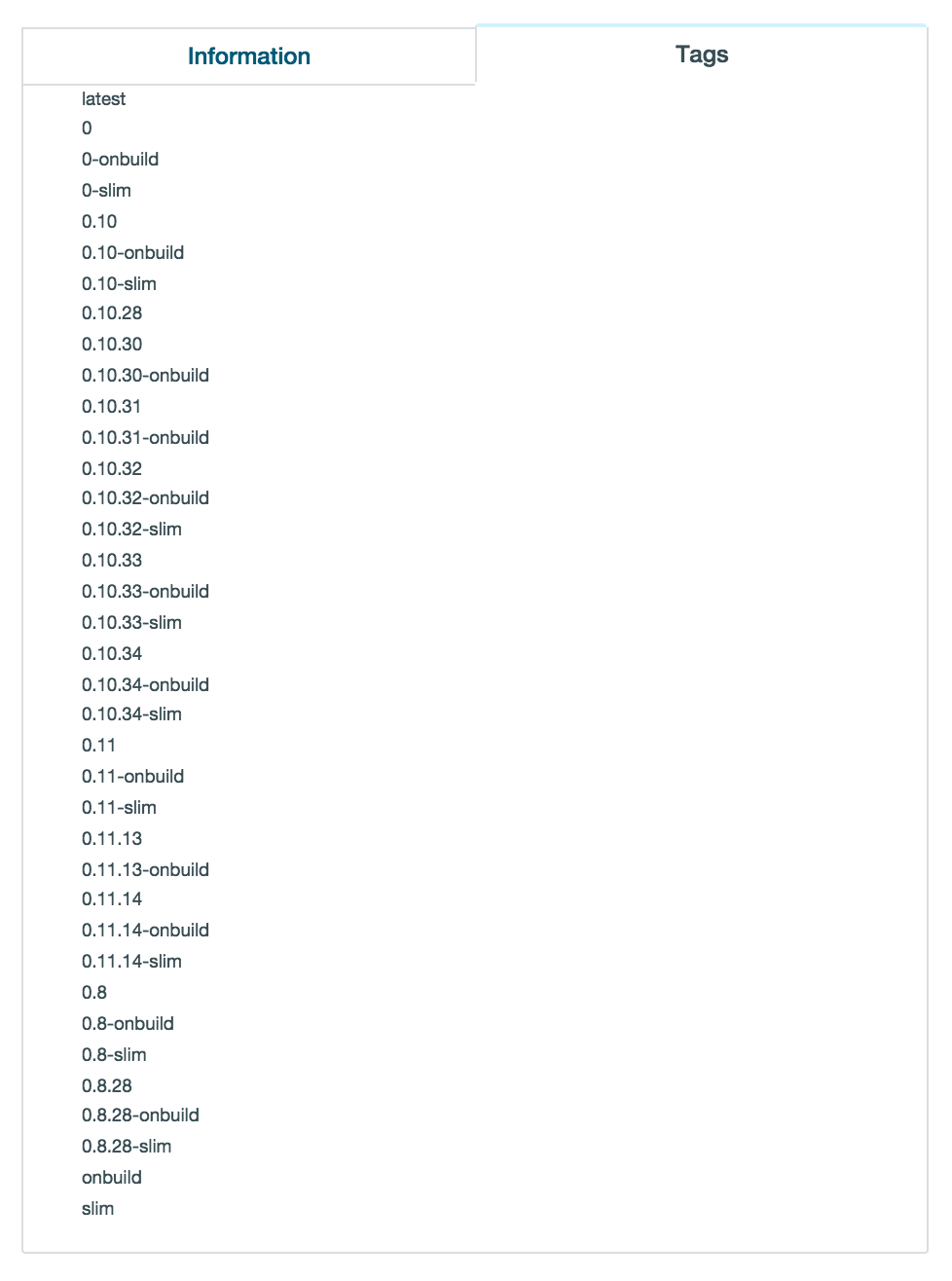

To use Crashlytics I needed to join the Fabric developer programme, a cross-platform mobile development suite from Twitter that includes a number of modules and tools to help with the application development lifecycle. Crashlytics is the module designed around crash reporting and alerts.

After joining the programme I received the standard email saying I’m on the list. Nothing to see here.

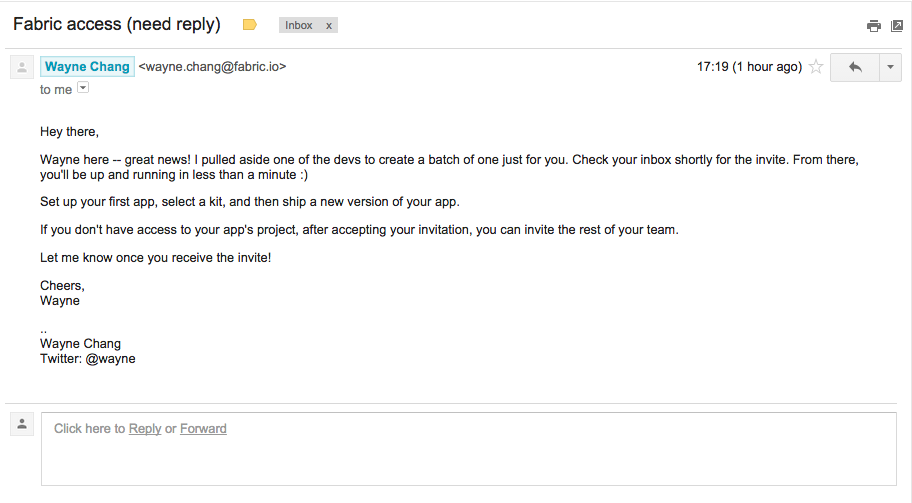

After 9 minutes a second email arrived. Enough time had passed that it could have been personal rather than automated. Unlikely, but I still like to believe.

A couple of items instantly stood out from the email.

1) Firstly, the subject: “Fabric access (need reply)”. About 10 minutes earlier I had been told I was on the list; now I had an email about my access that needed a reply. It sparked my interest enough to open it.

2) The opening paragraph states the founder “pulled aside one of the devs to create a batch of one just for you.” – This instantly gives the user special treatment and makes them feel important. I don’t believe this happened, but there is still a positive feeling attached to the statement and the company as a whole. It’s a nice touch.

3) “Check your inbox shortly for the invite” – This keeps me engaged and the product at the front of my mind. It also starts to build anticipation that I might be joining something special.

4) “Let me know once you receive the invite!” – A great way to engage with users and start the conversation. It doesn’t ask about first experiences or say “only get in touch if you need something”, both of which make the user stop and think. It would be really interesting to see whether this sparks conversations and what questions come back in reply to the initial email.

A few moments later an invite code arrived and I signed up instantly. Sadly, I didn’t let Wayne know. Sorry, Wayne.