One of the new features within SQL Server 2008 is Declarative Management Framework, a new policy based management framework allowing you to define what can or cannot be done on the server itself which can then be redistributed across your network of servers for more consistent management. Content based on the June CTP.

Three main components make up the framework

- Policy management. Create the policies from within SQL Management Studio.

- Explicit administration. Select and manage targets for the policies.

- Automated administration. How and when the policies are evaluated.

Some of the important terminology includes:

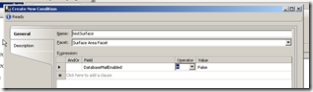

Facet. Set of logical properties that model behaviour or characteristics. There are a number of facets built into the server, each of these have a set of properties for which you can set a condition on based on what the policy is. For example, there is a Surface Area Facet which allows you to set if a setting should be on or off.

Facet. Set of logical properties that model behaviour or characteristics. There are a number of facets built into the server, each of these have a set of properties for which you can set a condition on based on what the policy is. For example, there is a Surface Area Facet which allows you to set if a setting should be on or off. - Condition. Expression of allowed states.

- Policy. Condition and the expected behaviour.

- Policy group. Group to help manage policies. A policy can only belong to one group.

- Execution Mode. How the policy will be executed.

What is a Facet again?

Facet is one of the core concepts within the DMF. They set out all of the properties which can be used as part of the condition/policy without you having to worry about how to locate and access the information. When you create a new condition, you say which facet contains the information you want, and then set a condition to the properties you are interested in.

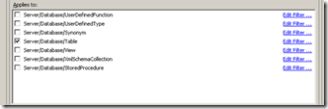

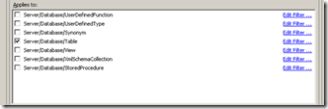

When it comes to creating a policy, the facet plays a part in what the policy can be targeted at. The Multipart Name Facet can be targeted/applied to a number of different objects in the system while the surface area facet only applies at server level. This makes managing the policy a lot similar as you only see the options which you are interested in.

When it comes to creating a policy, the facet plays a part in what the policy can be targeted at. The Multipart Name Facet can be targeted/applied to a number of different objects in the system while the surface area facet only applies at server level. This makes managing the policy a lot similar as you only see the options which you are interested in.

If your unsure on where the property you are interested in is contained within which facet, you can right click on the object > policies > facet. This will display all the related facets with all the properties set to match the selected object. From here, you can also create a policy to define how it should be configured, makes it quick to setup a known baseline and tweak the policy from there.

What and How are they executed

DMF executes the created policies either on an ad-hoc basis, or more automated via Check on Schedule, Check On Changes or Enforce which are set when the policy is created, or changed at a later point. Policies can also be set to disabled/enabled. Policies can also be checked in test mode to make sure they are working correctly. Check On Schedule uses a SQL Server Agent job to evaluate a policy. Check On Changes uses event notification to evaluate based on when changes occur. Enforce uses DDL triggers to prevent policy violations. Notice, the first two do not prevent, just notify, while Enforce prevents the changes taking place.

Dan has done a really good write up on the details of enforce at Declarative Management- Behind Enforce. To summarise the main points. Enforce works off a public DDL trigger which listens for all server and database events. This is a single trigger and is updated with the events required which is based on the active polices, which in turn is based on the facet. The DM engine runs inside the SQLCLR, however it runs even if SQLCLR is set to Off as the Off mode still allows assemblies signed by Microsoft to execute. An interesting point is that the DM is dependent on the DDL eventing and so can only enforce state on objects that are transactions. If it is not a transaction, then it is not allowed to be enforce (option will be disabled) as it cannot be cleanly rollbacked if it fails.

How do you create a policy?

When creating a policy, the first thing you need to do is create a condition. This is all built into SQL Management Studio, within the Object Explorer, under Management there is a new tree item for Policy Management. This contains all of the information relating to the facets, conditions and policies related to the connected server.

To create a new condition, right click Conditions or a Facet to display the create dialog, you will need to give it a name, choose the related Facet and then set the conditions. You can do some really powerful expressions, however I do not think there is a way to do cross two facets. Maybe giving this as a SQL statement would allow more flexibility.

Once the condition has been created, you can then attach a policy to that condition. Right click Policies and select new policy. You will need to give it a name, select the condition you just created, then select what the policy applies to, finally selecting the execution mode. That’s it, the policy is now setup to manage the server. A policy can only have one related condition, however you can have a group of policies.

As already mentioned, you can create a condition and related policy from an existing database object.

Where are they stored?

Policies are stored in the msdb database but also can be exported as a not very interesting XML file. They can be accessed via views, or via SQL Management Studio.

Bug

There is currently a known bug in the CTP which means the DMF does not work. As mentioned, when the CLR is off only Microsoft signed assemblies can be executed, however the Declarative Management DLL did not get signed as part of the build process. When a policy is executed, it causes the following error:

Msg 10314, Level 16, State 11, Procedure sp_syspolicy_execute_policy, Line 25

An error occurred in the Microsoft .NET Framework while trying to load assembly id 65536. The server may be running out of resources, or the assembly may not be trusted with PERMISSION_SET = EXTERNAL_ACCESS or UNSAFE. Run the query again, or check documentation to see how to solve the assembly trust issues. For more information about this error:

System.IO.FileLoadException: Could not load file or assembly ‘Microsoft.SqlServer.DmfSqlClrWrapper, Version=10.0.0.0, Culture=neutral, PublicKeyToken=89845dcd8080cc91’ or one of its dependencies. Strong name validation failed. (Exception from HRESULT: 0x8013141A) —> System.Security.SecurityException: Strong name validation failed. (Exception from HRESULT: 0x8013141A)

System.Security.SecurityException:

You need to enter the following command and restart services to allow SQL to execute non-signed/strong named assemblies. The ‘89845DCD8080CC91’ string is the Public Key Token for the assembly in case you was wondering.

“%ProgramFiles%Microsoft Visual Studio 8SDKv2.0Binsn.exe” -Vr Microsoft.SqlServer.DmfSqlClrWrapper,89845DCD8080CC91

Summary

The Declarative Management Framework is an excellent addition to the SQL Server toolset and I can see a lot of benefit for DBAs, no more going around 100 servers to check that the CLR is enabled, instead they can just execute an ad-hoc script or enforce it by default.

Dan Jones, who works on the SQL team, has lots of interesting information about this on his blog.

http://blogs.msdn.com/dtjones/default.aspx

SQL Books online:

ms-help://MS.SQLCC.v10/MS.SQLSVR.v10.en/s10de_4deptrbl/html/ef2a7b3b-614b-405d-a04a-2464a019df40.htm